Even Evan You Can't Understand AI-Written Code Anymore — What Should We Do?

The Framework Author Can’t Read His Own Framework’s Code

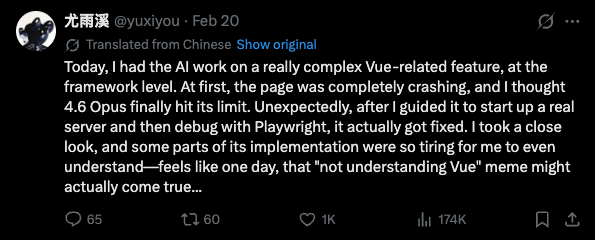

Today I came across a post from Evan You (creator of Vue):

He described how he had AI work on a really complex Vue feature at the framework level. At first, the page completely crashed — he thought Opus 4.6 had finally hit its limit. But after guiding it to spin up a real server and debug with Playwright, it actually got fixed. When he took a close look at the implementation, some parts were so complex that even he found them tiring to understand — and he joked that the “not understanding Vue” meme might actually come true one day.

The impact of this statement lies in who said it: the creator of Vue. His understanding of Vue is probably deeper than almost anyone else’s on the planet. If even he struggles to follow AI-written Vue code, what about the rest of us?

Are You Actually Reading AI-Generated Code?

This brings up a more practical question: how many people actually review AI-generated code carefully?

Honestly, when I use Claude Code, how closely I review depends on the context:

- Core business logic: I read it carefully, because bugs are on me

- Utility scripts, one-off tasks: A quick skim — if it runs, it’s fine

- Boilerplate, config files: Barely look at it — I trust the AI’s output

I’d guess most people are similar. And as trust in AI builds over time, the “quick skim” zone keeps expanding while the “careful review” zone keeps shrinking.

This isn’t laziness — it’s a natural human response to productivity tools. Just as you wouldn’t read the assembly code generated by a compiler, when AI-generated code keeps running reliably, the motivation to review line by line naturally fades.

Three Trends Already Underway

First, AI writes code differently than humans.

Humans write code with readability, team conventions, and code review in mind. AI is under no such pressure. It tends to optimize for correctness and completeness, not for being “comfortable for humans to read.” So AI-generated code often has sound structure but feels off, like reading an essay with perfect grammar but no soul.

Second, complexity is escalating.

Evan You’s example is particularly telling. He had AI work on a framework-level feature — not writing a component or calling an API. AI can already operate at this level, and its solutions may involve implementation paths you’d never have considered. When the solution itself exceeds your cognitive range, “understanding it” becomes a luxury.

Third, debugging is changing.

Notice Evan You’s workflow: AI writes code → page crashes → guide AI to debug with Playwright → fixed. Throughout this process, the human’s role isn’t “writing code” or “reading code” — it’s guiding AI to verify and fix things the right way. This is an entirely different way of working.

So What Should We Do?

I don’t think “insisting on understanding every line of code” is a sustainable strategy. Code volume is exploding, and AI’s output speed far exceeds human reading speed. But not reviewing at all isn’t viable either — at least not yet.

Here’s what I think is pragmatic:

1. Focus on boundaries, not implementation details.

You don’t need to understand every line AI writes, but you do need to understand what it does, what the inputs and outputs are, and what it affects. It’s like using a third-party library: you don’t need to read its source code, but you should know its API and side effects.
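One way to make “focus on boundaries” concrete is to write the inputs, outputs, and side-effect expectations down as types and enforce them where your code meets the AI-written code. The sketch below is only an illustration under made-up assumptions: `PriceInput`, `PriceResult`, `computePrice`, and `withContract` are hypothetical names, not part of Vue, Claude Code, or anything from Evan You’s post.

```typescript
// Hypothetical boundary for an AI-written pricing module.
// The types say what goes in and what comes out; nothing else is promised.
interface PriceInput {
  readonly unitPriceCents: number;
  readonly quantity: number;
  readonly discountPercent: number; // expected range: 0 to 100
}

interface PriceResult {
  readonly totalCents: number;
}

type PriceFn = (input: PriceInput) => PriceResult;

// Stand-in for the implementation you did not read line by line.
const computePrice: PriceFn = ({ unitPriceCents, quantity, discountPercent }) => {
  const gross = unitPriceCents * quantity;
  return { totalCents: Math.round(gross * (1 - discountPercent / 100)) };
};

// Wrap any implementation so the contract is enforced at the boundary.
function withContract(fn: PriceFn): PriceFn {
  return (input) => {
    if (input.quantity < 0 || input.unitPriceCents < 0) {
      throw new RangeError("price and quantity must be non-negative");
    }
    if (input.discountPercent < 0 || input.discountPercent > 100) {
      throw new RangeError("discountPercent must be between 0 and 100");
    }
    const snapshot = JSON.stringify(input);
    const result = fn(input);
    // Side-effect check: the implementation must not mutate its input.
    if (JSON.stringify(input) !== snapshot) {
      throw new Error("contract violated: input was mutated");
    }
    // Output check: a total must be a non-negative whole number of cents.
    if (!Number.isInteger(result.totalCents) || result.totalCents < 0) {
      throw new Error("contract violated: invalid totalCents");
    }
    return result;
  };
}

const safeComputePrice = withContract(computePrice);
```

With a wrapper like this, the body of `computePrice` can be regenerated or rewritten freely. As long as the contract holds, callers of `safeComputePrice` don’t need to re-read it.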

2. Invest in testing, not code review.

Rather than spending time reading code line by line, spend time writing good tests. Tests automatically verify whether behavior is correct without requiring you to “understand” the implementation. Evan You’s use of Playwright for debugging follows this exact principle — validate through execution, not visual inspection.
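As a rough sketch of what “validate through execution” can look like, the tests below treat an implementation as a black box and check only its observable behavior. Everything here is hypothetical: `slugify` stands in for some AI-written function, and `check` is a tiny helper so the example runs without a test framework. In a real project you would more likely use a test runner such as Vitest or Playwright.

```typescript
// Stand-in for an AI-written function whose internals you have not studied.
// (Hypothetical example; only its observable behavior matters to the tests.)
function slugify(title: string): string {
  return title
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-")
    .replace(/^-+|-+$/g, "");
}

// Minimal assertion helper so the sketch runs without a test framework.
function check(name: string, condition: boolean): void {
  if (!condition) {
    throw new Error(`failed: ${name}`);
  }
}

// Run the behavioral checks; returns the number of checks that passed.
function runSlugifyTests(): number {
  let passed = 0;
  const samples = ["Hello, World!", "  Vue 3 & Vite  ", "already-a-slug", "???"];

  // Example-based checks: known input, expected output.
  check("basic title", slugify("Hello, World!") === "hello-world");
  passed++;
  check("trims separators", slugify("  Vue 3 & Vite  ") === "vue-3-vite");
  passed++;

  for (const s of samples) {
    const slug = slugify(s);
    // Property: output uses only lowercase letters, digits, and single hyphens.
    check(`charset for "${s}"`, /^[a-z0-9]*(-[a-z0-9]+)*$/.test(slug));
    passed++;
    // Property: slugifying a slug changes nothing (idempotence).
    check(`idempotent for "${s}"`, slugify(slug) === slug);
    passed++;
  }
  return passed;
}
```

The property-style checks (allowed characters, idempotence) are the useful part: they stay valid even if the implementation is replaced wholesale, which is the situation you are in when AI rewrites the code.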

3. Learn “conversational debugging.”

When AI-written code breaks, the most efficient approach often isn’t reading the code to find the bug yourself — it’s describing the problem and letting AI fix it. This sounds like “giving up,” but it’s actually a new skill: you need to describe problems accurately, provide the right context, and guide AI in the right direction.

4. Maintain architectural understanding.

Implementation details can be delegated to AI, but you must clearly understand the overall system architecture, the relationships between modules, and the data flow. When AI writes code that’s “correct but unreasonable” in some module, only someone who understands the big picture can spot the problem.

This Isn’t a New Problem

Think about it — this anxiety isn’t actually new.

- Assembly programmers once looked down on code generated by C compilers

- C programmers once distrusted Java’s automatic memory management

- Backend developers once thought ORM-generated SQL wasn’t optimized enough

Every time a new layer of abstraction emerges, it’s accompanied by anxiety about “losing control.” But ultimately, most people choose to trust the abstraction and focus on higher-level problems.

AI writing code may be the next great leap in abstraction. The difference is that this leap might be bigger than any that came before. When Vue’s creator admits he can barely follow AI’s implementation, we may be witnessing a turning point.

Final Thoughts

I’m not sure when most code will become incomprehensible to humans. Maybe next year, maybe five years from now. But the direction is already clear.

Rather than worrying, it’s better to adapt early. Transform yourself from “a person who writes code” to “a person who directs AI to write code” — the sooner you start this transition, the better.

After all, even Evan You already works this way.